Assignment 1 (Phase 1, Sprint 1)

Table of Contents

- 1. Introduction

- 2. Ticket #1: Implement a Basic Hash Table

- 3. Left: Test-Driven Approach

- 4. Right: Classical Approach

- 5. Ticket #2: Utility Functions

- 6. Ticket #3: More, More Basic, Data Structures

- 7. Ticket #4: Refactoring and Improvements

- 7.1. Step 12: Refactoring of Hash Table and Linked List

- 7.2. Step 13: Supporting Arbitrary Data as Elements, Keys and Values

- 7.2.1. Where Does

elem_tbelong? - 7.2.2. Pass Function Pointers to the List’s Constructor

- 7.2.3. Using Function Pointers to Operate on Elements

- 7.2.4. Update the Iterator

- 7.2.5. Update the Hash Table to Support Generic Data

- 7.2.6. Update

ioopm_hash_table_values()to Return a Linked List - 7.2.7. Finish This Step

- 7.2.1. Where Does

- 7.3. Step 14: Performance Testing

- 7.4. Step 15: Wrapping Up

Intended start: 2018-09-17

Soft deadline: 2018-10-05

Hard deadline: 2018-10-19

1 Introduction

This assignment serves several purposes:

- Demonstrate typical imperative programming idioms, and typical C idioms.

- Get you started in using programming tools – other than just

editors, compilers and debuggers. In particular, we will be

using a profiler (

gprof), a coverage tracker (gcov), and a memory leak detector (valgrind). And of course version control. - Create multiple opportunities to demonstrate mastery of achievements.

- Force you to code – reading and writing code are the only two techniques that work when learning how to program, and only if you do both.

Concretely, the assignment will have you develop a small library1 of data structures for C programs together with some minimal “driver programs” that use your data structures, and tests for your data structures.

Note that Assignment 2 will be built on-top of the data structures you develop now, and be a lot more free. Thus, it will pay off to do a good job now, as it will simplify the work of the next assignment.

By the end of this sprint you should have:

- Implemented a full-featured, generic hash table and linked list and an iterator for the latter.

- Demonstrated a suitable number of goals (make your own calculation based on your required velocity2).

- Started to get a feeling for what good code is, what bad code is, and where your code sits on that continuum.

- Have written about 1000 lines of code (LOC) plus another 1000 lines of test code. (This of course depends on your coding style. If you hit say 2000 LOC without counting tests, you probably need some help. Data point: Students on IOOPM who finished early wrote 1700–2500 LOC.)

1.1 Prerequisites to Start

- You should have finished the C boot-strapping exercises, which involves:

- Having familiarised yourself with the SIMPLE programming methodology, and understanding key concepts like dodging and cheating.

- Having familiarised yourself with Emacs, the editor we are using for at least the C part of the course.

- You should have found a partner to work with from your team.

- You should have gotten a GitHub repo from the course. Naturally, you are to place all files belonging to this assignment in the folder for Assignment 1.

- Make sure you have a working development environment for C.

Both 3. and 4. should trivially be the case if you are done with 1.

1.2 Organisation of this Document

Rather than simply giving you a list of things to do based on the

knowledge one has after having implemented similar things, the

assignment is going to have a story line. Thus, we will be making

judgment calls, backing out of decisions that turned out to be

sub-optimal, refactoring parts of the code as we develop better

ways of accomplishing tasks and want to back-port them to be used

everywhere, etc. etc. Because we are going to incrementally

evolve this code, make sure to use version control properly. At a

minimum, follow the instructions to check in at the end of each

step. That way, it will be much simpler to dig up old versions of

a function etc. if needed. If you find yourself making copies of

source files, like hash_table_step12.c, you are doing it

wrong. (Please ask for help if you don’t understand why!)

If you are already a good C programmer, you may be frustrated by the way this assignment progresses. In that case, I’d like to know when you are feedbacking to me at the end of this assignment. If you are a seasoned C programmer paired with a novice programmer, remember that the goal of the assignment is not to implement something – it is to learn something, and implementing is a means to this end.

Also, please – unless explicitly asked to – do not Google how to write X in C until you have read and tinkered with the explanations of this document. In general, please refrain from using Google and Stackoverflow (etc.) during this assignment – use Piazza instead.

The entire assignment is laid out as a series of steps with the intended velocity of 1-2 steps per weekday on average. Note that not all steps have equal length. Expect early steps to take longer (inverse proportional to your past coding experience perhaps?). If you want to plan ahead, read ahead and take notes of what is coming up. Early on, expect to be stuck on a compile error on a single line for an hour at times (ask for help if stuck on a compile error >20 minutes). Later on, expect to be mostly stuck debugging some behaviour in your program. Bottom-line: how long each step will take is hard to plan, and is going to be very individual.

1.3 Organisation of Files

If you are relatively new to programming, it may not be clear to you what the file structure could look like for this assignment. Here is an example of what your directory could look like when you are done with the entire assignment.

assig1/ # the directory containing all files ├── common.h # all common declarations ├── hash_table.c # hash table code ├── hash_table.h # hash table declarations ├── hash_table_tests.c # hash table tests ├── linked_list.c # linked list and iterator code ├── linked_list.h # linked list declarations ├── linked_list_tests.c # linked list tests ├── iterator.h # iterator declarations ├── Makefile # for compiling, testing, running └── README.md # report and build instructions (written by you)

2 Ticket #1: Implement a Basic Hash Table

The main work of this assignment will be done in the files

hash_table.h and hash_table.c. The former is the header file,

which declares functions and some necessary types. The latter

contains all struct definitions and function definitions. The .c

file will include the .h file using #include "hash_table.h".

To avoid multiple inclusion problems, the .h file will start

with #pragma once3.

#pragma once /** * @file hash_table.h * @author write both your names here * @date 1 Sep 2019 * @brief Simple hash table that maps integer keys to string values. * * Here typically goes a more extensive explanation of what the header * defines. Doxygens tags are words preceeded by either a backslash @\ * or by an at symbol @@. * * @see http://wrigstad.com/ioopm19/assignments/assignment1.html */ /// @brief Create a new hash table /// @return A new empty hash table ioopm_hash_table_t *ioopm_hash_table_create(); /// @brief Delete a hash table and free its memory /// param ht a hash table to be deleted void ioopm_hash_table_destroy(ioopm_hash_table_t *ht); /// @brief add key => value entry in hash table ht /// @param ht hash table operated upon /// @param key key to insert /// @param value value to insert void ioopm_hash_table_insert(ioopm_hash_table_t *ht, int key, char *value); /// @brief lookup value for key in hash table ht /// @param ht hash table operated upon /// @param key key to lookup /// @return the value mapped to by key (FIXME: incomplete) void *ioopm_hash_table_lookup(ioopm_hash_table_t *ht, int key); /// @brief remove any mapping from key to a value /// @param ht hash table operated upon /// @param key key to remove /// @return the value mapped to by key (FIXME: incomplete) char *ioopm_hash_table_remove(ioopm_hash_table_t *ht, int key);

%23pragma%20once%0A%0A%2F%2A%2A%0A%20%2A%20%40file%20hash_table.h%0A%20%2A%20%40author%20write%20both%20your%20names%20here%0A%20%2A%20%40date%201%20Sep%202019%0A%20%2A%20%40brief%20Simple%20hash%20table%20that%20maps%20integer%20keys%20to%20string%20values.%0A%20%2A%0A%20%2A%20Here%20typically%20goes%20a%20more%20extensive%20explanation%20of%20what%20the%20header%0A%20%2A%20defines.%20Doxygens%20tags%20are%20words%20preceeded%20by%20either%20a%20backslash%20%40%5C%0A%20%2A%20or%20by%20an%20at%20symbol%20%40%40.%0A%20%2A%0A%20%2A%20%40see%20http%3A%2F%2Fwrigstad.com%2Fioopm19%2Fassignments%2Fassignment1.html%0A%20%2A%2F%0A%0A%2F%2F%2F%20%40brief%20Create%20a%20new%20hash%20table%0A%2F%2F%2F%20%40return%20A%20new%20empty%20hash%20table%0Aioopm_hash_table_t%20%2Aioopm_hash_table_create%28%29%3B%0A%0A%2F%2F%2F%20%40brief%20Delete%20a%20hash%20table%20and%20free%20its%20memory%0A%2F%2F%2F%20param%20ht%20a%20hash%20table%20to%20be%20deleted%0Avoid%20ioopm_hash_table_destroy%28ioopm_hash_table_t%20%2Aht%29%3B%0A%0A%2F%2F%2F%20%40brief%20add%20key%20%3D%3E%20value%20entry%20in%20hash%20table%20ht%0A%2F%2F%2F%20%40param%20ht%20hash%20table%20operated%20upon%0A%2F%2F%2F%20%40param%20key%20key%20to%20insert%0A%2F%2F%2F%20%40param%20value%20value%20to%20insert%0Avoid%20ioopm_hash_table_insert%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%2C%20char%20%2Avalue%29%3B%0A%0A%2F%2F%2F%20%40brief%20lookup%20value%20for%20key%20in%20hash%20table%20ht%0A%2F%2F%2F%20%40param%20ht%20hash%20table%20operated%20upon%0A%2F%2F%2F%20%40param%20key%20key%20to%20lookup%0A%2F%2F%2F%20%40return%20the%20value%20mapped%20to%20by%20key%20%28FIXME%3A%20incomplete%29%0Avoid%20%2Aioopm_hash_table_lookup%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%29%3B%0A%0A%2F%2F%2F%20%40brief%20remove%20any%20mapping%20from%20key%20to%20a%20value%0A%2F%2F%2F%20%40param%20ht%20hash%20table%20operated%20upon%0A%2F%2F%2F%20%40param%20key%20key%20to%20remove%0A%2F%2F%2F%20%40return%20the%20value%20mapped%20to%20by%20key%20%28FIXME%3A%20incomplete%29%0Achar%20%2Aioopm_hash_table_remove%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%29%3B%0A

2.1 Recap: Hash Tables

A hash table data structure is a map from keys to values that uses the concept of hashing internally for performance. Many programming languages have support for maps, either as a library or built into the language itself. It is quite common that standard maps (aka dictionaries) are hash maps.

As the hashing concept is not important for our purposes here, we will not devote much time to how to write good hash functions. (Please put this on your backlog for after the course.)

Consider the following Python program that uses a map to keep track of quantities of merchandise in a warehouse:

# Starting a python interpreter $ python # Program as entered into an interactive prompt >>> # Create an empty map >>> quantities = {} >>> quantities['answers'] = 42 >>> quantities['perfumes'] = 4711 >>> quantities['primes_under_10'] = 4 >>> >>> # Look up the value for a specific key >>> quantities['answers'] 42 >>> >>> # Dump the contents of quantities (note the order) >>> quantities {'perfumes': 4711, 'primes_under_10': 4, 'answers': 42} >>> >>> # Get the keys of the maps >>> quantities.keys() ['perfumes', 'primes_under_10', 'answers'] >>> >>> # Delete the entry for a specific key >>> del quantities['answers'] >>> >>> # Print the contents of quantities >>> for k in quantities.keys(): ... print(k, "maps to", quantities[k]) ... perfumes maps to 4711 primes_under_10 maps to 4 # ctrl+d to exit back to command line $

%23%20Starting%20a%20python%20interpreter%0A%24%20python%0A%23%20Program%20as%20entered%20into%20an%20interactive%20prompt%0A%3E%3E%3E%20%23%20Create%20an%20empty%20map%0A%3E%3E%3E%20quantities%20%3D%20%7B%7D%0A%3E%3E%3E%20quantities%5B%27answers%27%5D%20%3D%2042%0A%3E%3E%3E%20quantities%5B%27perfumes%27%5D%20%3D%204711%0A%3E%3E%3E%20quantities%5B%27primes_under_10%27%5D%20%3D%204%0A%3E%3E%3E%0A%3E%3E%3E%20%23%20Look%20up%20the%20value%20for%20a%20specific%20key%0A%3E%3E%3E%20quantities%5B%27answers%27%5D%0A42%0A%3E%3E%3E%0A%3E%3E%3E%20%23%20Dump%20the%20contents%20of%20quantities%20%28note%20the%20order%29%0A%3E%3E%3E%20quantities%0A%7B%27perfumes%27%3A%204711%2C%20%27primes_under_10%27%3A%204%2C%20%27answers%27%3A%2042%7D%0A%3E%3E%3E%0A%3E%3E%3E%20%23%20Get%20the%20keys%20of%20the%20maps%0A%3E%3E%3E%20quantities.keys%28%29%0A%5B%27perfumes%27%2C%20%27primes_under_10%27%2C%20%27answers%27%5D%0A%3E%3E%3E%0A%3E%3E%3E%20%23%20Delete%20the%20entry%20for%20a%20specific%20key%0A%3E%3E%3E%20del%20quantities%5B%27answers%27%5D%0A%3E%3E%3E%0A%3E%3E%3E%20%23%20Print%20the%20contents%20of%20quantities%0A%3E%3E%3E%20for%20k%20in%20quantities.keys%28%29%3A%0A...%20%20%20%20%20print%28k%2C%20%22maps%20to%22%2C%20quantities%5Bk%5D%29%0A...%0Aperfumes%20maps%20to%204711%0Aprimes_under_10%20maps%20to%204%0A%23%20ctrl%2Bd%20to%20exit%20back%20to%20command%20line%0A%24%0A

We are going to build a hash table with the above features (and more) in a number of discrete steps. These steps are structured to tell a story where we incrementally arrive at a final program. There is no complete concise specification – you will have to work through this document bit by bit, because it discusses pros and cons, gets philosophical, etc. The point of this assignment is carrying it out, not having carried it out.

2.2 The Above Code in C

Below is a rough translation of the code above into C. Note that since C has no built-in dictionary, we must roll our own. Indeed, that is what this assignment is about. If you compile and run the program below, this is the output you will get:

== Dump the map == key: answers => value: 42 key: perfumes => value: 4711 key: primes_under_10 => value: 4 == Dump the keys == key: answers key: perfumes key: primes_under_10 == Delete the first entry == == Dump the map == key: perfumes => value: 4711 key: primes_under_10 => value: 4

Note that the program that you are going to read is quite awful for many reasons:

- The number of entries in the map is static – chosen at compile-time4).

- Even though we only use 3 entries, we pay for 10 with respect to memory consumption.

- We don’t yet use a hash, meaning that time complexity of searching and sorting is terrible (what is it?).

- There is no order of the entries (other than the order in which they were stored).

#include <stdio.h> #include <stdlib.h> /// Define a data structure for holding a key and a value struct entry { char *key; /// A string int value; }; /// make entry_t an alias for struct entry typedef struct entry entry_t; /// make map_t an alias for an array of 10 entry_t's typedef entry_t map_t[10]; /// The starting point of any C program int main(void) { /// Create an array or 10 elements, of which we are going to use 3 map_t map; int map_size = 3; /// Populate array just like in the Python example map[0] = (entry_t) { .key = "answers", .value = 42 }; map[1] = (entry_t) { .key = "perfumes", .value = 4711 }; map[2] = (entry_t) { .key = "primes_under_10", .value = 4 }; /// Print the contents of the map puts("== Dump the map =="); for (int i = 0; i < map_size; ++i) /// ++i means i = i + 1 { printf("key: %s => value: %d\n", map[i].key, map[i].value); } /// Print the keys puts("\n== Dump the keys =="); for (int i = 0; i < map_size; ++i) { printf("key: %s\n", map[i].key); } /// Delete the entry with index 0 puts("\n== Delete the first entry =="); for (int i = 1; i < map_size; ++i) { map[i-1] = map[i]; } map_size -= 1; /// means map_size = map_size - 1; /// Print the contents of the map again to see effect of change puts("\n== Dump the map =="); for (int i = 0; i < map_size; ++i) { printf("key: %s => value: %d\n", map[i].key, map[i].value); } return 0; }

%23include%20%3Cstdio.h%3E%0A%23include%20%3Cstdlib.h%3E%0A%0A%2F%2F%2F%20Define%20a%20data%20structure%20for%20holding%20a%20key%20and%20a%20value%0Astruct%20entry%0A%7B%0A%20%20char%20%2Akey%3B%20%2F%2F%2F%20A%20string%0A%20%20int%20value%3B%0A%7D%3B%0A%0A%2F%2F%2F%20make%20entry_t%20an%20alias%20for%20struct%20entry%0Atypedef%20struct%20entry%20entry_t%3B%0A%0A%2F%2F%2F%20make%20map_t%20an%20alias%20for%20an%20array%20of%2010%20entry_t%27s%0Atypedef%20entry_t%20map_t%5B10%5D%3B%0A%0A%2F%2F%2F%20The%20starting%20point%20of%20any%20C%20program%0Aint%20main%28void%29%0A%7B%0A%20%20%2F%2F%2F%20Create%20an%20array%20or%2010%20elements%2C%20of%20which%20we%20are%20going%20to%20use%203%0A%20%20map_t%20map%3B%0A%20%20int%20map_size%20%3D%203%3B%0A%0A%20%20%2F%2F%2F%20Populate%20array%20just%20like%20in%20the%20Python%20example%0A%20%20map%5B0%5D%20%3D%20%28entry_t%29%20%7B%20.key%20%3D%20%22answers%22%2C%20.value%20%3D%2042%20%7D%3B%0A%20%20map%5B1%5D%20%3D%20%28entry_t%29%20%7B%20.key%20%3D%20%22perfumes%22%2C%20.value%20%3D%204711%20%7D%3B%0A%20%20map%5B2%5D%20%3D%20%28entry_t%29%20%7B%20.key%20%3D%20%22primes_under_10%22%2C%20.value%20%3D%204%20%7D%3B%0A%0A%20%20%2F%2F%2F%20Print%20the%20contents%20of%20the%20map%0A%20%20puts%28%22%3D%3D%20Dump%20the%20map%20%3D%3D%22%29%3B%0A%20%20for%20%28int%20i%20%3D%200%3B%20i%20%3C%20map_size%3B%20%2B%2Bi%29%20%2F%2F%2F%20%2B%2Bi%20means%20i%20%3D%20i%20%2B%201%0A%20%20%20%20%7B%0A%20%20%20%20%20%20printf%28%22key%3A%20%25s%20%3D%3E%20value%3A%20%25d%5Cn%22%2C%20map%5Bi%5D.key%2C%20map%5Bi%5D.value%29%3B%0A%20%20%20%20%7D%0A%0A%20%20%2F%2F%2F%20Print%20the%20keys%0A%20%20puts%28%22%5Cn%3D%3D%20Dump%20the%20keys%20%3D%3D%22%29%3B%0A%20%20for%20%28int%20i%20%3D%200%3B%20i%20%3C%20map_size%3B%20%2B%2Bi%29%0A%20%20%20%20%7B%0A%20%20%20%20%20%20printf%28%22key%3A%20%25s%5Cn%22%2C%20map%5Bi%5D.key%29%3B%0A%20%20%20%20%7D%0A%0A%20%20%2F%2F%2F%20Delete%20the%20entry%20with%20index%200%0A%20%20puts%28%22%5Cn%3D%3D%20Delete%20the%20first%20entry%20%3D%3D%22%29%3B%0A%20%20for%20%28int%20i%20%3D%201%3B%20i%20%3C%20map_size%3B%20%2B%2Bi%29%0A%20%20%20%20%7B%0A%20%20%20%20%20%20map%5Bi-1%5D%20%3D%20map%5Bi%5D%3B%0A%20%20%20%20%7D%0A%20%20map_size%20-%3D%201%3B%20%2F%2F%2F%20means%20map_size%20%3D%20map_size%20-%201%3B%0A%0A%20%20%2F%2F%2F%20Print%20the%20contents%20of%20the%20map%20again%20to%20see%20effect%20of%20change%0A%20%20puts%28%22%5Cn%3D%3D%20Dump%20the%20map%20%3D%3D%22%29%3B%0A%20%20for%20%28int%20i%20%3D%200%3B%20i%20%3C%20map_size%3B%20%2B%2Bi%29%0A%20%20%20%20%7B%0A%20%20%20%20%20%20printf%28%22key%3A%20%25s%20%3D%3E%20value%3A%20%25d%5Cn%22%2C%20map%5Bi%5D.key%2C%20map%5Bi%5D.value%29%3B%0A%20%20%20%20%7D%0A%0A%20%20return%200%3B%0A%7D%0A

2.3 How Hash Tables Work Internally

There is a wealth of information on the Internet on how hash tables work. This is a good opportunity to use your “search fu”5. Below, I only include a minimal description.

As we saw above, a hash table maps a key to a value. In pseudo

code syntax, if M is a map, M[k] looks up the value for k in

M, and M[k] = v updates M so that subsequent lookup of k

returns v. Keys can be pretty much anything (we will look more

deeply into that in a bit). Here is an example where keys are

integers and values are strings:

#+ATTR_HTML: :copy-button t # Pseudo code (Python) M = {} # creates a new hash table M[0] = "foo" M[1] = "bar" M[2] = "baz" print(M[1]) # prints bar M[1] = "barbara" print(M[1]) # prints barbara

%23%2BATTR_HTML%3A%20%3Acopy-button%20t%0A%23%20Pseudo%20code%20%28Python%29%0AM%20%3D%20%7B%7D%20%23%20creates%20a%20new%20hash%20table%0AM%5B0%5D%20%3D%20%22foo%22%0AM%5B1%5D%20%3D%20%22bar%22%0AM%5B2%5D%20%3D%20%22baz%22%0Aprint%28M%5B1%5D%29%20%23%20prints%20bar%0AM%5B1%5D%20%3D%20%22barbara%22%0Aprint%28M%5B1%5D%29%20%23%20prints%20barbara%0A

We want to be able to support efficient insertion, lookup, and deletion of entries in the map (and eventually a bunch of other things). If we restrict ourselves to integer-values keys (like in the above example, i.e., 0, 1, 2, …) then we could easily represent the hash table as an array of values:

// C code char *M[3]; // array with three strings M[0] = "foo"; M[1] = "bar"; M[2] = "baz"; puts(M[1]); // prints bar M[1] = "barbara"; puts(M[1]); // prints barbara

%2F%2F%20C%20code%0Achar%20%2AM%5B3%5D%3B%20%2F%2F%20array%20with%20three%20strings%0AM%5B0%5D%20%3D%20%22foo%22%3B%0AM%5B1%5D%20%3D%20%22bar%22%3B%0AM%5B2%5D%20%3D%20%22baz%22%3B%0Aputs%28M%5B1%5D%29%3B%20%2F%2F%20prints%20bar%0AM%5B1%5D%20%3D%20%22barbara%22%3B%0Aputs%28M%5B1%5D%29%3B%20%2F%2F%20prints%20barbara%0A

Good news about this representation:

- It is very simple to understand and program for (this is great)

- Insertion and lookup are fast, and \(O(1)\)6

Bad news about this representation:

- Only handles integer keys (we knew that, but worth repeating, lest we forget!)

- Only works if we know the largest key value when the map is created

- Does not work well for a sparse representation (e.g., few keys of widely different values)

- Does not support deletion

The lack of support for deletion is actually rooted in a bigger problem – there is no way to represent an absence of a value in the array! Imagine for example that our values are integers too – then the array can only store integers, and unless we e.g., reserve a special number, say -42, to denote no value, a key will always map to something (some integer value). Whatever value we pick, we restrict the usage of our map in programs. In our (bad) hypothetical examples, a program that wants to store -42 as a value will not work with our map.

There are several ways around this. For example, we could store a

separate, equally sized array of booleans (true or false)

where the value true at index i means “there is an entry for

key i”, and false that there is no entry. Another example is

to change the array to hold both the value and a boolean that

controls whether the value is in the set or not. (Etc.) There are

pros and cons with either approach.

However, this points to another underlying problem, which is the same as the bad fit for sparse representation mentioned above. For example, imagine that we in addition to the keys 0, 1 and 2 above also ended up using the key 1.000. This requires an array that reserves space for storage of at least 1.001 elements (not 1.000 – can you see why?) but only uses 4 (less than 1%) of the storage. (While that is wasteful, it may be OK for a large class of programs if the greatest key is relatively small (like 1.000), and we only have a small number of maps. However, for reasons we will see later, as we lift the restriction that keys are integers, this may not be the case.)

The last problem is related to the fact that the array representation only works if we know the largest key value when the map is created. This problem can be solved by creating a larger array when keys are used that do not fit in the current array. A similar scheme can be used to (possibly) move to a smaller array on deletion.

It is possible to solve all of the problems above by changing the representation (meaning how the abstract notion of a hash table mapping keys to values is stored in memory) so that:

- Each index in the array covers a dynamic number of keys7 instead of a single key; and

- Each value in the array is a sequence of (key,value) pairs so that each entry in the map is represented by a corresponding (key,value) pair (that we will call an entry).

In that representation, then index 0 in the array could (for

example) cover all keys in the set {0, 15, 31, 47, ...}. Looking

up key 47 of the map M means reading M[0] to access a sequence

of (key,value) pairs in which we can search for a pair whose key

is 47. If no such pair exists, then there is no entry for the key

47 in the map. Adding an entry for key 47 means adding a

(key,value) pair to a sequence, possibly updating or replacing a

previous entry. In typical hash table parlance, we talk about an

array of buckets, each with zero or more entries.

To avoid having to know the range of keys in use for a particular

program, we use modulo (C syntax: %). So, when inserting a key

k in a hash table with b buckets, the new entry goes into

bucket k modulo b (k % b).

Let us quickly analyse how this representation fares with respect to the good and bad news from above:

- Still very simple to understand and program for

- Insertion and lookup are still fast – the array let’s us jump to the right bucket in \(O(1)\) time, after which we can search in the bucket (typically \(O(n)\)8, where \(n\) is the number of entries in the bucket – typically a quite small number)

- It handles sparse representations well because it only stores data (in the form of pairs) for the entries in the map (as opposed to all possible entries as before).

- For the same reason, it trivially handles deletion and the absence of an entry for a key.

- The same strategy for growing and shrinking the array to not need a priori knowledge about the possible keys can be applied. Additionally, we can also adapt by changing the number of buckets (this involves moving entries across buckets).

Now, what remains is to lift the restriction that only integers can be used as keys. This is where the hash function comes in, from which the hash table derives its name. Glossing over many details that you will have to look up (but feel free to do that after the course), a hash function is a function that given a datum returns an integer – a hash code. The hash code is usually some kind of semi-unique “fingerprint” of a datum, so that the hash code for similar data still have different hash codes. We use the hash code internally to select the bucket for a key (in \(O(1)\) time).

Development of hash functions is super interesting, quite subtle and out of scope for this course.

For concreteness, here is a very basic hash function for hashing a string which produces an integer from a string by calculating the sum of all ASCII values of all the characters.

unsigned long string_sum_hash(const char *str) { unsigned long result = 0; do { result += *str; /// *str is the ASCII value of } while (*++str != '\0'); return result; }

unsigned%20long%20string_sum_hash%28const%20char%20%2Astr%29%0A%7B%0A%20%20unsigned%20long%20result%20%3D%200%3B%0A%20%20do%0A%20%20%20%20%7B%0A%20%20%20%20%20%20result%20%2B%3D%20%2Astr%3B%20%2F%2F%2F%20%2Astr%20is%20the%20ASCII%20value%20of%0A%20%20%20%20%7D%0A%20%20while%20%28%2A%2B%2Bstr%20%21%3D%20%27%5C0%27%29%3B%0A%20%20return%20result%3B%0A%7D%0A

(Later in this document, we will see at least one better hash function implementation.)

Using non-integer keys in a hash table thus requires the existence of a function that for a given value in the key domain returns an integer (and always the same value for the same key). Typically, we hide this to the users, except for the fact that we ask them to provide such a function that we subsequently use internally.

For now, we will dodge and consider only lists that store integers. This seems like a good simplification, and innocuous too – we know how to undo it later by adding a hash function.

2.4 Hash Table Representation

From our above discussion, our hash table will be represented as an array of buckets, and each bucket will hold a sequence of entries. In addition to that, we will hold some auxiliary data like the size of the array, etc. The auxiliary data will grow as we make our hash table increasingly fancy. The sequence of entries will not be another array, but a linked structure, implemented by letting each entry know the location in memory of the next entry (using a pointer). Using an array is of course possible, and comes with all of the pros and cons from above9. For the rest of this assignment, we will stick with a linked structure.

Following the SIMPLE methodology, we are going to dodge and simplify the specification. Our first hash table implementation will only support integer keys and string values, and only support a fixed number of buckets (17). This is enough to write some useful tests, to weed out early bugs. Note that while the number of buckets is fixed, each bucket can have an “unbounded” number of entries.

Always make a note of dodges and cheats so that you can revisit

them and “fix them” later. Without such a log, it is easy to

forget to e.g. undo a simplification later. You can mark this in

your code e.g., /// DODGE: ..., /// CHEAT: ..., etc. like the

/// FIXME: ..., and /// TODO: ... that is used in code on

this page.

Tip: the fixmee Emacs package allows you to quickly jump

between FIXME, TODO etc. tags in code, or list all such tags

and navigate to them. If you use the course’s Emacs settings, it

also understands CHEAT and DODGE.

Below are the initial definitions of the structs we will be using.

We will be revising these several times as we go. Remember that

typedef creates a type alias. Also note that we prefix the

hash table type with ioopm_. This will be a naming convention

for all public types on this course, meaning, you will use this

naming scheme for things in your code! The entry_t type is only

used internally by the hash table, so it will not get a public

name.

typedef struct entry entry_t; typedef struct hash_table ioopm_hash_table_t; struct entry { int key; // holds the key char *value; // holds the value entry_t *next; // points to the next entry (possibly NULL) }; struct hash_table { entry_t *buckets[17]; };

typedef%20struct%20entry%20entry_t%3B%0Atypedef%20struct%20hash_table%20ioopm_hash_table_t%3B%0A%0Astruct%20entry%0A%7B%0A%20%20int%20key%3B%20%20%20%20%20%20%20%2F%2F%20holds%20the%20key%0A%20%20char%20%2Avalue%3B%20%20%20%2F%2F%20holds%20the%20value%0A%20%20entry_t%20%2Anext%3B%20%2F%2F%20points%20to%20the%20next%20entry%20%28possibly%20NULL%29%0A%7D%3B%0A%0Astruct%20hash_table%0A%7B%0A%20%20entry_t%20%2Abuckets%5B17%5D%3B%0A%7D%3B%0A

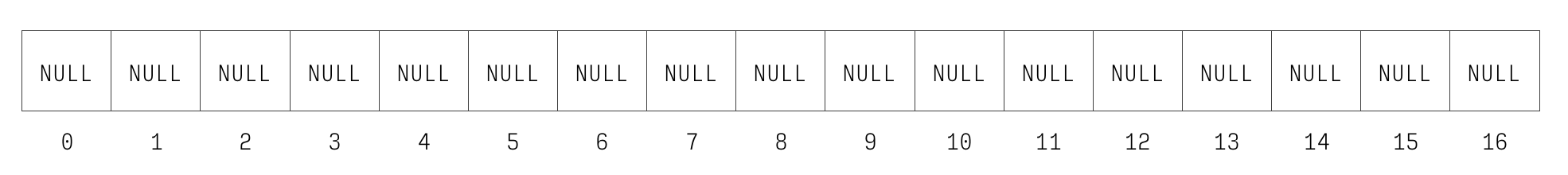

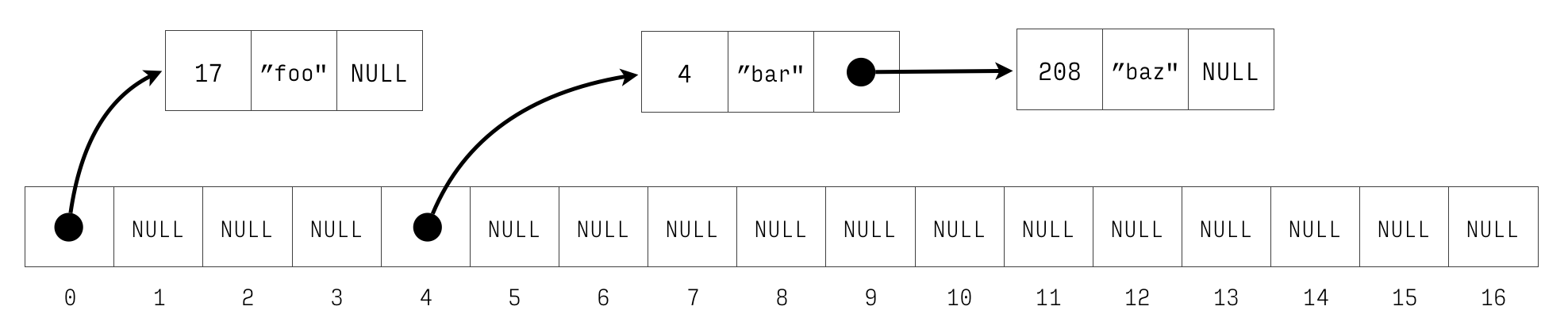

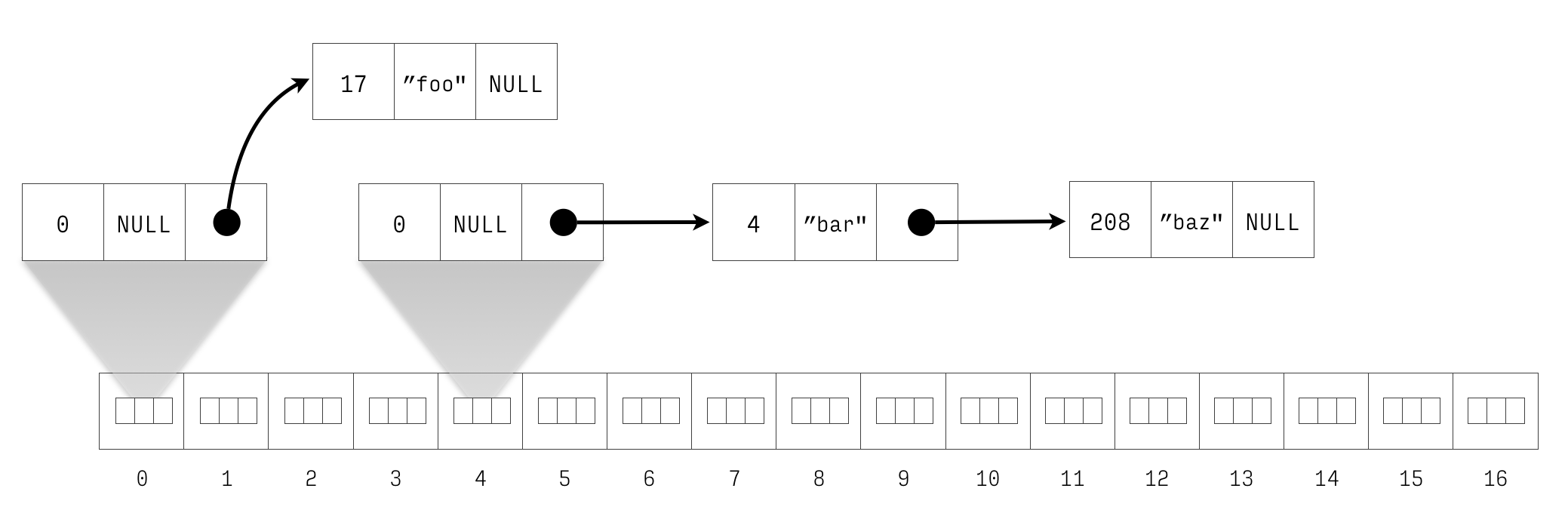

The figure 1 and Figure 2 show the hash table newly

initialised and with three entries respectively. ADDR means a

non-NULL pointer pointing to the datum on the right.

Figure 1: Newly initialised hash table.

Figure 2: Hash table with keys 4 \(\mapsto\) bar, 17 \(\mapsto\) foo, and 208 \(\mapsto\) “baz”.

2.4.1 Placement of struct hash_table and struct entry etc.

The placement of structs is important. We want data structures to be opaque, meaning it should not be possible for a client to know how they are implemented. This preserves maximal freedom for us to change the implementation as we continue to improve our code, and also helps us protect the invariants of the data structure.

Structs such as struct hash_table and struct entry are

internal implementation details and as such should be in the

hash_table.c file, not the .h file. Structs like option (if

you implemented it) needs to go into the .h file, as a user

must be able to look inside the struct to search values.

Placement inside the .c file has impact on the testing instead

– how will tests be able to access the structs?

Place the structs in the right places, and know your motivation for what you chose.

This is a good time to start thinking about Achievement A3.

2.4.2 Using Debuggers

Once you are done with this bit, you know enough gdb to debug

your own problems in the subsequent steps. If and when you have

a tricky situation to debug, after you solved it, you should

definitely use that to demonstrate Achievement R52.

Debuggers like gdb and lldb are great tools for understanding

programs and fixing bugs. You need to familiarise yourself with a

debugger (say, gdb) as soon as possible so that you are never

afraid to use it later. You should learn the basics before

hitting a bug, so you don’t need to struggle both with learning

how to navigate gdb and using it to find the bug at the same

time.

So, in that spirit, let us spend a few minutes in gdb to explore

the hash table representation of above. Below is a program that

(on the stack for simplicity) creates a hash table. Compile this

program like so: gcc -pedantic -Wall -g gdb-test.c (read about

what the flags mean here). This produces a file a.out that you

can run through gdb. Note that compiling this program will

produce a warning that you’re declaring a variable ht that you

are not using. We can live with this warning for now – we are

going to use ht through the debugger.

#include <stdlib.h> /// the types from above typedef struct entry entry_t; typedef struct hash_table ioopm_hash_table_t; struct entry { int key; // holds the key char *value; // holds the value entry_t *next; // points to the next entry (possibly NULL) }; struct hash_table { entry_t *buckets[17]; }; int main(int argc, char *argv[]) { entry_t a = { .key = 1, .value = argv[1] }; entry_t b = { .key = 2, .value = argv[2], .next = &a }; entry_t c = { .key = 3, .value = argv[3], .next = &b }; entry_t d = { .key = 4, .value = argv[4] }; entry_t e = { .key = 5, .value = argv[5] }; entry_t f = { .key = 6, .value = argv[6], .next = &e }; ioopm_hash_table_t ht = { .buckets = { 0 } }; ht.buckets[3] = &c; ht.buckets[8] = &d; ht.buckets[15] = &f; return 0; }

%23include%20%3Cstdlib.h%3E%0A%0A%2F%2F%2F%20the%20types%20from%20above%0Atypedef%20struct%20entry%20entry_t%3B%0Atypedef%20struct%20hash_table%20ioopm_hash_table_t%3B%0A%0Astruct%20entry%0A%7B%0A%20%20int%20key%3B%20%20%20%20%20%20%20%2F%2F%20holds%20the%20key%0A%20%20char%20%2Avalue%3B%20%20%20%2F%2F%20holds%20the%20value%0A%20%20entry_t%20%2Anext%3B%20%2F%2F%20points%20to%20the%20next%20entry%20%28possibly%20NULL%29%0A%7D%3B%0A%0Astruct%20hash_table%0A%7B%0A%20%20entry_t%20%2Abuckets%5B17%5D%3B%0A%7D%3B%0A%0Aint%20main%28int%20argc%2C%20char%20%2Aargv%5B%5D%29%0A%7B%0A%20%20entry_t%20a%20%3D%20%7B%20.key%20%3D%201%2C%20.value%20%3D%20argv%5B1%5D%20%7D%3B%0A%20%20entry_t%20b%20%3D%20%7B%20.key%20%3D%202%2C%20.value%20%3D%20argv%5B2%5D%2C%20.next%20%3D%20%26a%20%7D%3B%0A%20%20entry_t%20c%20%3D%20%7B%20.key%20%3D%203%2C%20.value%20%3D%20argv%5B3%5D%2C%20.next%20%3D%20%26b%20%7D%3B%0A%20%20entry_t%20d%20%3D%20%7B%20.key%20%3D%204%2C%20.value%20%3D%20argv%5B4%5D%20%7D%3B%0A%20%20entry_t%20e%20%3D%20%7B%20.key%20%3D%205%2C%20.value%20%3D%20argv%5B5%5D%20%7D%3B%0A%20%20entry_t%20f%20%3D%20%7B%20.key%20%3D%206%2C%20.value%20%3D%20argv%5B6%5D%2C%20.next%20%3D%20%26e%20%7D%3B%0A%20%20ioopm_hash_table_t%20ht%20%3D%20%7B%20.buckets%20%3D%20%7B%200%20%7D%20%7D%3B%0A%20%20ht.buckets%5B3%5D%20%3D%20%26c%3B%0A%20%20ht.buckets%5B8%5D%20%3D%20%26d%3B%0A%20%20ht.buckets%5B15%5D%20%3D%20%26f%3B%0A%0A%20%20return%200%3B%0A%7D%0A

We are now going to run this program in gdb:

gdb ./a.outStartgdbwitha.outbeing the program we are running.r one two three four five sixRun the program. This will finish nicely and print something like “[Inferior 1 (process 17723) exited normally]”. The “one … six” are the command-line arguments to the program meaningargv[1]will be “one”, etc.b mainSet a breakpoint at the start of themain()function causing the program to stop and return control togdbwhen we hit this point in the program.r one two three four five sixStart the program again. This time, the program will be paused immediately at the start.listPrint the source code of the current line and surrounding lines.n(repeat this until you reach line 27, aka line 7 inmain()) Steps the program further, one step at the time. You can see what line is being executed.p aPrint the contents of theavariable.n(repeat until you reach line 30, aka line 10 inmain())p ht.buckets[3]->next->next->keyGo to the fourth element inbucketsinht, and follow thenextpointer, twice, and print the key in the corresponding entry.cGo back to executing the program until finish, or we hit another breakpoint. This will finish the program again.

In addition to n (next), there is also s (step), which steps

into an operation. For example, if the debugger is positioned on

the line x = foo(), n will run foo() and store the returned

value in x, whereas s will start foo() and immediately break

at its first line, allowing you to step through foo() as well.

The gdb (and lldb) debuggers are great! For example if you

run your program through gdb and you experience a crash, gdb

will allow you to print a backtrace (using the bt command)

that shows you the path in the program to the place where the

error occurred. Here is an example of what this might look like in

gdb.

Reading symbols from a.out...done.

(gdb) r

Starting program: /home/stw/t/ioopm/2018/hash/a.out

a.out: driver.c:74: main: Assertion `ioopm_hash_table_has_key(h, nev, str_equality) == true' failed.

Program received signal SIGABRT, Aborted.

__GI_raise (sig=sig@entry=6) at ../sysdeps/unix/sysv/linux/raise.c:51

51 ../sysdeps/unix/sysv/linux/raise.c: No such file or directory.

(gdb) bt

#0 __GI_raise (sig=sig@entry=6) at ../sysdeps/unix/sysv/linux/raise.c:51

#1 0x00007ffff7a2df5d in __GI_abort () at abort.c:90

#2 0x00007ffff7a23f17 in __assert_fail_base (fmt=<optimized out>,

assertion=assertion@entry=0x555555555f40 "ioopm_hash_table_has_key(h, nev, str_equality) == true", file=file@entry=0x555555555e89 "driver.c", line=line@entry=74,

function=function@entry=0x555555556076 <__PRETTY_FUNCTION__.3061> "main") at assert.c:92

#3 0x00007ffff7a23fc2 in __GI___assert_fail (

assertion=0x555555555f40 "ioopm_hash_table_has_key(h, nev, str_equality) == true",

file=0x555555555e89 "driver.c", line=74,

function=0x555555556076 <__PRETTY_FUNCTION__.3061> "main") at assert.c:101

#4 0x0000555555555739 in main (argc=1, argv=0x7fffffffda58) at driver.c:74

This is the very very bare minimum of gdb that you need to know,

but you should know that there is lots more. You can even travel

backwards in time and step your program backwards.

One trick that has worked very well for me is to

“secretly” run programs through gdb so that if they crash,

they produce a backtrace: gdb -batch -ex "run" -ex "bt" --args

./a.out one two three four five six.

(For lldb, you can use this which is almost the same: lldb -b -o "run" -k "bt" -k "quit".)

Because this is a bit dull to write, I have an alias set up for

this in my .bashrc file (actually .zshrc because I run zsh):

alias bt='gdb -batch -ex "run" -ex "bt" --args'. This allows me

to write bt ./a.out one two three four five six. and get a nice

backtrace10 in the event of a segfault.

2.5 A Fork in The Road

You are standing on a dirt road. A little further up ahead, there is a fork, that splits the road in two directions: one leading left and one leading right. To go left, scroll down to Section 3. To go right, scroll down to Section 4. (But not until you have read the next two paragraphs)

We are finally about to start implementing the hash table. These instructions offer you two ways forward. One is the test-driven approach in which we start by first defining a success criteria for the next step, write that down in the form of a test, and then proceed to write the actual implementation, using the test to check when we are done. The other approach is the classical approach, in which we first write the implementation, and then write the tests to verify that the implementation is correct.

Regardless of the approach, we will end up with an implementation of some feature and at least one test for the implementation. The amount of work is the same. Pick whatever approach you like, as long as you try both – seriously – during the course.

(Now you’ve read the two paragraphs! Go left or right?)

3 Left: Test-Driven Approach

Test-driven development is based on the idea that tests should be written before the code they are testing, and thus serve as a definition of correctness for that code. By starting with the tests, we are forced to decide what are valid behaviours and what is the semantics of the function before we start coding it up, which is very nice.

The test-driven approach will thus work very much like the classical approach, but change in two important ways:

- For each step, we start by defining the success criteria of that step, expressed as a test – and equate finishing the step with the passing of the test.

- Because we are test-driven, the order in which we implement things might sometimes change because in order to test something, we usually need ways of inspecting it, or at least getting some data for it.

A possible order of basic features for a hash table is this:

- Create a new, empty hash table

- Destroy a hash table and free its used memory

- Lookup the value for a given key

- Insert a new key–value mapping in a hash table

- Remove a key–value mapping from a hash table

3.1 Features 1 & 2: Create and Destroy

Start by writing a stub for the functions under test so we can compile and run a (failing) test:

ioopm_hash_table_t *ioopm_hash_table_create() void ioopm_hash_table_destroy(ioopm_hash_table_t *ht)

ioopm_hash_table_t%20%2Aioopm_hash_table_create%28%29%0Avoid%20ioopm_hash_table_destroy%28ioopm_hash_table_t%20%2Aht%29%0A

Note that for some functions, there are some design decisions left to you which may change the signature.

Creation and deletion could possibly be a single step, but we separate them here to simplify the discussion. Implementing lookup before insertion might seem strange, but it is totally fine even though our initial tests for lookup are going to be very boring because it will only be able to interact with empty hash tables.

Before we begin, read the page on the CUnit test framework which is the mandated framework to use for unit testing on this course. Those instructions will give you a minimal unit test file that you can populate with tests for the hash table.

How do we test creation and destruction of a data structure?

Future tests will test the correctness of the implementation of

the data representation, but for now creation and destruction can

be tested together. Our test will create an ioopm_hash_table_t,

destroy same hash table, and we will run this test under

valgrind, to make sure that we both allocate and free

appropriately.

Here is what such a test could look like:

void test_create_destroy() { ioopm_hash_table_t *ht = ioopm_hash_table_create(); CU_ASSERT_PTR_NULL(ht); ioopm_hash_table_destroy(ht); }

void%20test_create_destroy%28%29%0A%7B%0A%20%20%20ioopm_hash_table_t%20%2Aht%20%3D%20ioopm_hash_table_create%28%29%3B%0A%20%20%20CU_ASSERT_PTR_NULL%28ht%29%3B%0A%20%20%20ioopm_hash_table_destroy%28ht%29%3B%0A%7D%0A

OK, so from a external testing perspective, we now know that the

success criteria for the implementation of

ioopm_hash_table_create() and ioopm_hash_table_destroy() is

that whatever data was created by the first is removed by the

second. Note that as tests go, this is no super great – if we

leave the definition of both functions under test empty, the tests

will pass. However, as our feature sets grow, so will our tests,

which will take care of this.

Now that we have a test for both functions, now is the time to start thinking about how to implement them. Look at the discussion in Step 0 and Step 4 from the “Classical Approach”. Then return here.

Ideally, after writing the test, you want to execute it and see

that it fails. This is in line with the SIMPLE approach (always

have a running program), and requires that we start the actual

implementation of the features by defining stubs for the functions

under test, e.g., a one-line ioopm_hash_table_create() function

that returns NULL etc. This will cause our test to fail which is

good because our goal is to make it pass! Moreover, if we can get

the test to compile, run and fail before implementing the

feature we are testing, we do not need to fix bugs both in the

test and the feature under test at the same time!

3.2 Feature 3: Looking up Values

Start by writing a stub for the function under test so we can compile and run a (failing) test:

char *ioopm_hash_table_lookup(ioopm_hash_table_t *ht, int key)

char%20%2Aioopm_hash_table_lookup%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%29%0A

Note that there are some design decisions left to you which may change the signature.

Next step is to implement support for looking up values in a hash table. This is going to be a very useful feature, not in the least for testing many other features like insertion and removal. From a testing perspective, we could try to manually construct a hash table and use it for looking up, but this is brittle and will haunt us if we ever need to change the internal representation. Instead, for now, let us as a test simply verify that a newly created hash table is completely empty.

We can draw on our knowledge of the internal representation in our choice of lookup operations. If we know that the number of bucket lists is 17, then we can easily carve up a test where we are sure to hit all the buckets. However, tempting as it may be to draw on such internal information, constants such as the number of buckets may change in the future. Unless the test uses the same constant as the hash table11, it may be better to treat the object under test as a black box. Nevertheless, here is an initial test based on trying to hit all buckets, do one wrap-around (17 should wrap to bucket 0), and dealing with negative numbers. (Are they possible? Should they be possible? How should they be handled?)

void test_lookup() { ioopm_hash_table_t *ht = ioopm_hash_table_create(); for (int i = 0; i < 18; ++i) /// 18 is a bit magical { CU_ASSERT_PTR_NULL(ioopm_hash_table_lookup(ht, i)); } CU_ASSERT_PTR_NULL(ioopm_hash_table_lookup(ht, -1)); ioopm_hash_table_destroy(ht); }

void%20test_lookup%28%29%0A%7B%0A%20%20%20ioopm_hash_table_t%20%2Aht%20%3D%20ioopm_hash_table_create%28%29%3B%0A%20%20%20for%20%28int%20i%20%3D%200%3B%20i%20%3C%2018%3B%20%2B%2Bi%29%20%2F%2F%2F%2018%20is%20a%20bit%20magical%0A%20%20%20%20%20%7B%0A%20%20%20%20%20%20%20CU_ASSERT_PTR_NULL%28ioopm_hash_table_lookup%28ht%2C%20i%29%29%3B%0A%20%20%20%20%20%7D%0A%20%20%20CU_ASSERT_PTR_NULL%28ioopm_hash_table_lookup%28ht%2C%20-1%29%29%3B%0A%20%20%20ioopm_hash_table_destroy%28ht%29%3B%0A%7D%0A

The code snippet above exemplifies a great case for using a comment. The number 18 is seemingly “magical” – it is likely not obvious to most casual readers of this code why it was chosen. A comment is a good way to explain why it was chosen, so that a future coder will know to change it if the number of buckets change (at least if they stumble across the code and read the comment).

Now that we have an initial (albeit fairly uninteresting) test for look-ups, now is the time to start thinking about how to implement the lookup function. Look at the discussion in Step 2 from the “Classical Approach”. Then return here.

3.3 Feature 4: Inserting Values

Start by writing a stub for the function under test so we can compile and run a (failing) test:

void ioopm_hash_table_insert(ioopm_hash_table_t *ht, int key, char *value)

void%20ioopm_hash_table_insert%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%2C%20char%20%2Avalue%29%0A

Note that there are some design decisions left to you which may change the signature.

Dijkstra’s classical quote about testing – that testing can only prove the presence of errors, not the absence – stems from the fact that we, in the general case, cannot (be sure to) test all possible cases. Thus, if all tests pass, we cannot claim correctness, just that “all tests pass”.

Testing is to a large extent about being clever and economic about what tests to execute. If we are going to test a linked list, for example, what length should we test? 1? 1.000? 1.000.000? Etc. The key insight is to test the cases that represent particular states in the object under test. For a linked list, for example, testing against the empty list is important, as is a list with a single element, and a list with “a couple of” elements. These typically represent the important states of a list. For example, in a list with a single element, there is no middle element, so we need one with “a couple of” elements to cover that case.

For inserting values, there are going to be two, possibly three important basic cases to test for:

- Testing with a fresh key that is not already in use

- Testing with a key that is already in use

- Testing with an invalid key (if at all possible)

When we test these cases, we test them in isolation. If we tested all together, it will be harder for us to pinpoint the location of errors. For example, maybe a bug in inserting with an existing key leaves the hash table in a corrupted state that triggers an error on the next lookup. When that error happens, we would like to be able to deduce that the error is in the implementation of insert with an existing key.

Thus, to reduce the search space for the root cause of a bug, we

test the cases 1-3 separately. After this, it makes sense to test

the interaction between the cases, e.g. testing the sequences

1;1, 1;2, 2;2, etc. And possibly longer sequences. Thus, if

the tests for 1, 2 and 1;1 passes, but the test 1;2 fails, we

know that it is the interaction between 1 and 2, and that the bug

is likely in 2 given that 1;1 worked fine.

In a nut shell, test design is about finding a small enough number of tests that they can be executed in short enough time for us to be able to run them constantly, yet large enough to cover all the important states and state transitions of the object under test, and structured in such a way that for each failing test, the search space for the root cause of the bug is as small as possible.

We can use lookup to observe the changes after insert. For example, a minimal test for case 1 above would be:

- Create a new empty hash table \(h\)

- Verify that key \(k\) is not in \(h\) using lookup

- Insert a value \(v\) with key \(k\) in \(h\)

- Use lookup to verify that \(k\) maps to \(v\)

- Destroy \(h\)

Go ahead and code up that test in this function skeleton:

void test_lookup1() { ... }

void%20test_lookup1%28%29%0A%7B%0A%20%20...%0A%7D%0A

Complement this test function with others following the logic of above. When you are done, you have defined the success criteria for the insert function. Time to write it! Look at the discussion in Step 3 from the “Classical Approach”. Then return here.

3.4 Feature 5: Removing Values

Start by writing a stub for the function under test so we can compile and run a (failing) test:

void ioopm_hash_table_remove(ioopm_hash_table_t *ht, int key)

void%20ioopm_hash_table_remove%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%29%0A

Note that there are some design decisions left to you which may change the signature.

For this test, you are on your own! You know what to do – it will be very similar to the tests above. When you are done, implement support for removal following Step 4 from the “Classical Approach”. Then jump to Step 5: Refactoring and apply that while using the tests to make sure that your refactorings do not accidentally break stuff!

3.5 Moving On…

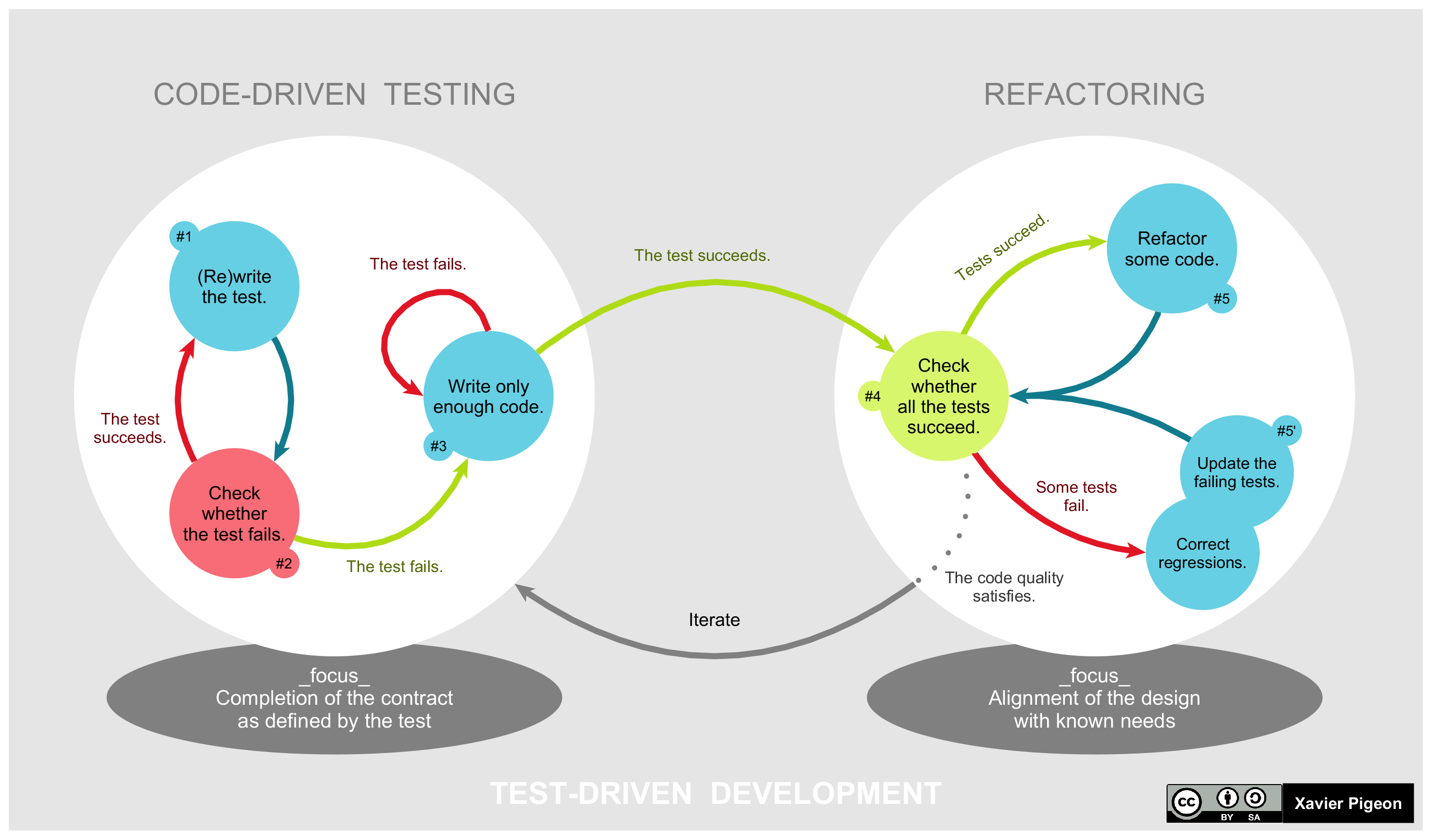

Now you know basic test-driven development! Granted, details are still a bit sketchy, but we are only on Assignment 1. This diagram shows the process of test-driven development quite clearly, especially after having completed it once.

Figure 3: The life-cycle of test-driven design (from Wikipedia)

Continue using the test-driven approach now, by jumping to Ticket #2: Utility Functions which is not written especially for a test-driven approach. That should not be needed now that you know what you are doing.

4 Right: Classical Approach

In a top-down way (starting from the public functions that a user of the hash table will see), we will start our implementation by designing, coding and testing the following features:

- Create a new, empty hash table

- Insert a new key–value mapping in a hash table

- Lookup the value for a given key

- Remove a key–value mapping from a hash table

- Delete a hash table and free its used memory

This order makes sense from a testing perspective: the first feature is a prerequisite of the following three, the second is a prerequisite of the following two (at least for interesting cases), and the third lets us see the effects of the 2nd and 4th. (In all honesty, delete should probably be made the second – but we punt on that now, because it is slightly involved.)

If we wanted to proceed in another order, we would have had to create and populate a list by hand to be able to write a reasonable test. (There is nothing wrong with that. For a lot of things in programming, there is no inherent right or wrong – this may vary from case to case and also be strongly influenced by what you perceive as natural or intuitive.)

Words of Advice Before You Start

Compile often. Initially, perhaps once for every line of code you edit. It is better to capture errors immediately and to fix small errors all the time rather than face a mountain of errors to fix later. Along the same lines, try to have a working program at all times – so that you can run it and see where it crashes.

Remember that the C compiler reads files top-down. Thus, if

function f appears before function g in the source, C does not

yet know g when you want to call it. This is solved by adding

function prototypes for private functions topside of the .c

file, and by including the corresponding .h file.

When you are compiling a program that does not have a main()

function, you need to use separate compilation by adding the

-c flag when you compile. This produces object code (e.g.

file.o) rather than an executable, and thus does not need any

main function.

When you read the output from the compiler, don’t read it from the end, but scroll up and read from the start. It is very likely, especially when you are a beginner C programmer, that later errors are caused by earlier errors that confused the C compiler. That means the later errors may be addressed by fixing the earlier errors. If you compile in Emacs rather than in the terminal, Emacs will interpret the compilers output so that if you click on an error (or move the cursor over it and hit enter), it will take you to the right place in the code immediately.

If you come across a function that you don’t know, try man

that_function on the command line on Linux or macOS. Usually you

will get lots of very useful information. Try e.g., man strncpy

now. You can also do man man to get information about how the

man pages system works.

4.1 Step 0: Creating an Empty Hash Table

Recapping the structure of the hash table from above, a hash table (for now) is simply an array of (pointers) to entries. Since we know the number of buckets statically –it is hard coded as 17 – and do not allow the number of buckets to change, we do not need to store any meta data.

We are now ready to code up the function to create the hash table.

Note the naming convention that we follow. Each public function’s

name starts with ioopm, followed by some symbol that groups the

functions in a clear way, followed by some symbol, usually a verb,

that explains what the function does. Thus, we can see directly

that the function ioopm_hash_table_create() belongs to the code

written for the ioopm course, and that it concerns – creates –

hash tables.

We noted before that, except for the array of buckets, we only store data for entries in the hash table. As the hash table is empty, all we need is an empty array.

IMPORTANT C CAVEAT

When we allocate memory in C using malloc(), C will return a

pointer to memory whose contents are unknown. They will contain

whatever bytes that happened to be in that part of memory. Thus,

after using malloc() we need to explicitly initialise the

newly allocated data.

The steps to creating a new hash table is thus:

- Allocate memory to hold 17 buckets where each bucket is

represented as a pointer to an

entry_t. - Iterate over the buckets and initialise them to

NULL. - Return a pointer to the allocated and initialised memory.

Here is a first stab of the function:

ioopm_hash_table_t *ioopm_hash_table_create() { /// Allocate space for a ioopm_hash_table_t = 17 pointers to entry_t's ioopm_hash_table_t *result = malloc(sizeof(ioopm_hash_table_t)); /// Initialise the entry_t pointers to NULL for (int i = 0; i < 17; ++i) { result->buckets[i] = NULL; } return result; }

ioopm_hash_table_t%20%2Aioopm_hash_table_create%28%29%0A%7B%0A%20%20%2F%2F%2F%20Allocate%20space%20for%20a%20ioopm_hash_table_t%20%3D%2017%20pointers%20to%20entry_t%27s%0A%20%20ioopm_hash_table_t%20%2Aresult%20%3D%20malloc%28sizeof%28ioopm_hash_table_t%29%29%3B%0A%20%20%2F%2F%2F%20Initialise%20the%20entry_t%20pointers%20to%20NULL%0A%20%20for%20%28int%20i%20%3D%200%3B%20i%20%3C%2017%3B%20%2B%2Bi%29%0A%20%20%20%20%7B%0A%20%20%20%20%20%20result-%3Ebuckets%5Bi%5D%20%3D%20NULL%3B%0A%20%20%20%20%7D%0A%20%20return%20result%3B%0A%7D%0A

There is a companion function to malloc(), called calloc(),

that initialised allocated memory by setting all its bits to zero.

The calloc() function is intented to be used to allocate arrays,

but can always be used in place of malloc(). To avoid nasty bugs

due to use of uninitialised memory, always use calloc() and

never use malloc().

Using calloc() we can rewrite the create function thus:

ioopm_hash_table_t *ioopm_hash_table_create() { /// Allocate space for a ioopm_hash_table_t = 17 pointers to /// entry_t's, which will be set to NULL ioopm_hash_table_t *result = calloc(1, sizeof(ioopm_hash_table_t)); return result; }

ioopm_hash_table_t%20%2Aioopm_hash_table_create%28%29%0A%7B%0A%20%20%2F%2F%2F%20Allocate%20space%20for%20a%20ioopm_hash_table_t%20%3D%2017%20pointers%20to%0A%20%20%2F%2F%2F%20entry_t%27s%2C%20which%20will%20be%20set%20to%20NULL%0A%20%20ioopm_hash_table_t%20%2Aresult%20%3D%20calloc%281%2C%20sizeof%28ioopm_hash_table_t%29%29%3B%0A%20%20return%20result%3B%0A%7D%0A

Note that with calloc() we need to specify how many hash

tables we want to allocate. Unless we are allocating an array of

values, this number is likely going to be 1 (like now).

4.1.1 Finish This Step

We already have a working ioopm_hash_table_create(), so we are

almost done. To finish this step, you should:

- Make sure that your code compiles with the appropriate flags turned on. Preferably using a Makefile. Once your makefile has grown a bit (and there are more files and more tests), you should use it to demonstrate Achievement U57.

- Check your code into the approprate GitHub repos. Tag your

commit with

assignment1_step0following the instructions in the previous link.

4.2 Step 1: Inserting a new (key, value) entry

This is a good time to start thinking about Achievement A1.

A first algorithm for inserting a new (key, value) entry into

our hash map could involve the following steps:

1. Find the right bucket for key 2. Insert the (key, value) into the bucket

This however fails to address the case when there is already

another entry, (key, other_value), in the hash map. A revised

algorithm could thus be:

1. (as before) 2. Search the entries in the bucket for an entry with key key 2.1 If found: replace the value of that entry with value 2.2 Otherwise: insert the (key, value) into the bucket

This seems like a working algorithm (right?), so let’s go ahead and code it up. Here:

void ioopm_hash_table_insert(ioopm_hash_table_t *ht, int key, char *value) { /// Calculate the bucket for this entry int bucket = key % 17; /// Get a pointer to the first entry in the bucket entry_t *first_entry = ht->buckets[bucket]; entry_t *cursor = first_entry; while (cursor != NULL) { if (cursor->key == key) { cursor->value = value; return; /// Ends the whole function! } cursor = cursor->next; /// Step forward to the next entry, and repeat loop } /// We only reach this point if we managed to search through the whole /// bucket without finding an entry with key as key /// Create an object for the new entry entry_t *new_entry = calloc(1, sizeof(entry_t)); /// Set the key and value fields to the key and value new_entry->key = key; new_entry->value = value /// Make the first entry the next entry of the new entry new_entry->next = first_entry; /// Make the new entry the first entry in the bucket ht->buckets[bucket] = new_entry; }

void%20ioopm_hash_table_insert%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%2C%20char%20%2Avalue%29%0A%7B%0A%20%20%2F%2F%2F%20Calculate%20the%20bucket%20for%20this%20entry%0A%20%20int%20bucket%20%3D%20key%20%25%2017%3B%0A%20%20%2F%2F%2F%20Get%20a%20pointer%20to%20the%20first%20entry%20in%20the%20bucket%0A%20%20entry_t%20%2Afirst_entry%20%3D%20ht-%3Ebuckets%5Bbucket%5D%3B%0A%0A%20%20entry_t%20%2Acursor%20%3D%20first_entry%3B%0A%20%20while%20%28cursor%20%21%3D%20NULL%29%0A%20%20%20%20%7B%0A%20%20%20%20%20%20if%20%28cursor-%3Ekey%20%3D%3D%20key%29%0A%20%20%20%20%20%20%20%20%7B%0A%20%20%20%20%20%20%20%20%20%20cursor-%3Evalue%20%3D%20value%3B%0A%20%20%20%20%20%20%20%20%20%20return%3B%20%2F%2F%2F%20Ends%20the%20whole%20function%21%0A%20%20%20%20%20%20%20%20%7D%0A%0A%20%20%20%20%20%20cursor%20%3D%20cursor-%3Enext%3B%20%2F%2F%2F%20Step%20forward%20to%20the%20next%20entry%2C%20and%20repeat%20loop%0A%20%20%20%20%7D%0A%0A%20%20%2F%2F%2F%20We%20only%20reach%20this%20point%20if%20we%20managed%20to%20search%20through%20the%20whole%0A%20%20%2F%2F%2F%20bucket%20without%20finding%20an%20entry%20with%20key%20as%20key%0A%0A%20%20%2F%2F%2F%20Create%20an%20object%20for%20the%20new%20entry%0A%20%20entry_t%20%2Anew_entry%20%3D%20calloc%281%2C%20sizeof%28entry_t%29%29%3B%0A%20%20%2F%2F%2F%20Set%20the%20key%20and%20value%20fields%20to%20the%20key%20and%20value%0A%20%20new_entry-%3Ekey%20%3D%20key%3B%0A%20%20new_entry-%3Evalue%20%3D%20value%0A%20%20%2F%2F%2F%20Make%20the%20first%20entry%20the%20next%20entry%20of%20the%20new%20entry%0A%20%20new_entry-%3Enext%20%3D%20first_entry%3B%0A%20%20%2F%2F%2F%20Make%20the%20new%20entry%20the%20first%20entry%20in%20the%20bucket%0A%20%20ht-%3Ebuckets%5Bbucket%5D%20%3D%20new_entry%3B%0A%7D%0A

Now, this solves the problem, and the code isn’t too bad. We could however refactor the code a little – for example, we could make a separate function for creating a new entry, and possibly also for searching through a bucket. Let’s pretend that we have written such functions (aka as cheating in the SIMPLE methodology), so that we can explore what such a refactored code could look like before we determine if it is worth the effort. Here:

void ioopm_hash_table_insert(ioopm_hash_table_t *ht, int key, char *value) { /// Calculate the bucket for this entry int bucket = key % 17; /// Search for an existing entry for a key entry_t *existing_entry = find_entry_for_key(ht->buckets[bucket], key); if (existing_entry != NULL) /// i.e., it exists { existing_entry->value = value; } else { /// Get a pointer to the first entry in the bucket entry_t *first_entry = ht->buckets[bucket]; /// Create a new entry entry_t *new_entry = entry_create(key, value, first_entry); /// Make the new entry the first entry in the bucket ht->buckets[bucket] = new_entry; } }

void%20ioopm_hash_table_insert%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%2C%20char%20%2Avalue%29%0A%7B%0A%20%20%2F%2F%2F%20Calculate%20the%20bucket%20for%20this%20entry%0A%20%20int%20bucket%20%3D%20key%20%25%2017%3B%0A%20%20%2F%2F%2F%20Search%20for%20an%20existing%20entry%20for%20a%20key%0A%20%20entry_t%20%2Aexisting_entry%20%3D%20find_entry_for_key%28ht-%3Ebuckets%5Bbucket%5D%2C%20key%29%3B%0A%0A%20%20if%20%28existing_entry%20%21%3D%20NULL%29%20%2F%2F%2F%20i.e.%2C%20it%20exists%0A%20%20%20%20%7B%0A%20%20%20%20%20%20existing_entry-%3Evalue%20%3D%20value%3B%0A%20%20%20%20%7D%0A%20%20else%0A%20%20%20%20%7B%0A%20%20%20%20%20%20%2F%2F%2F%20Get%20a%20pointer%20to%20the%20first%20entry%20in%20the%20bucket%0A%20%20%20%20%20%20entry_t%20%2Afirst_entry%20%3D%20ht-%3Ebuckets%5Bbucket%5D%3B%0A%20%20%20%20%20%20%2F%2F%2F%20Create%20a%20new%20entry%0A%20%20%20%20%20%20entry_t%20%2Anew_entry%20%3D%20entry_create%28key%2C%20value%2C%20first_entry%29%3B%0A%20%20%20%20%20%20%2F%2F%2F%20Make%20the%20new%20entry%20the%20first%20entry%20in%20the%20bucket%0A%20%20%20%20%20%20ht-%3Ebuckets%5Bbucket%5D%20%3D%20new_entry%3B%0A%20%20%20%20%7D%0A%7D%0A

The code above involves two functions I just made up:

find_entry_for_key() that takes a hash table and a key and

returns a pointer to an entry (or NULL if no such entry exists),

and entry_create() that creates a new entry with a given key,

value and next pointer.

Note how this lifts the abstraction of the code – someone that comes across this code can immediately understand what the code is doing, without having to understand exactly how it is done. Actually – the description above is pretty close to the bullet list description of the algorithm that we had just before we started coding. Strive for such clarity in your programs always!

Many times, it pays off to try to write the code top-down, i.e., by pretending that the key functions you need exist, and coding at decreasing level of abstraction. This causes you to initially specify the logic at a high-level, e.g., find an entry for a given key and create a new entry, and gradually drop down to a lower level (possibly applying the same strategy). This is a form of divide and conquer approach to problem solving, which is key to the possibility of solving big problems!

Before we code up the find_entry_for_key() and entry_create()

functions, let us consider one more aspect of the code. We always

insert new entries at the front of a bucket’s list (i.e., a

prepend). This means that entries in buckets are unsorted, which

in turn means that we must always search the whole list before we

can determine that it does not contain an entry with the key we

are looking for. If we tweaked our insertion so that the list was

ordered by keys, searching for a key \(k_1\) can terminate as soon as

we see a key \(k_2\) such that \(k_2 < k_1\). This seems like a good

choice if buckets have lots of entries.

This is an optimisation of the find operation. We can (and will) discuss later the case against premature optimisation (and whether or not this is an instance of it). For now, assuming that we want to implement this optimisation, it is important that we can do so without making it too costly to insert entries in a way that maintains the desired order. (Note that always inserting at the head of the list is very fast, and we will lose this now. Will losing the \(O(1)\) insertion to speed up search be an optimisation on the whole? If you have a moment to spare, this is an interesting question worth pondering. Also ask yourself if there is more information that we need to answer the question.)

Speaking of optimisation, we would like to also avoid e.g. using one search operation to find an existing entry with a given key, and failing to do so use another search to find the right place to insert the new entry.

After some thinking (and with experience, you’ll learn to see

these kinds of things immediately) we note that the two find

operations we have been discussing are strikingly similar. Let

(key, value) be the entry we are trying to add. If the first

search comes across an entry e such that e = (\(k_1\), \(v_1\))

such that \(k_1 > key\), then not only can we conclude that there is

no entry with key \(key\), but that (\(key\),\(value\)) should be added

right before e. Thus, with a little bit of cleverness, a single

find can serve both purposes with a single function and a single

function call. .

To this end, we can change the find_entry_for_key() function –

which is super cheap, as we haven’t written it yet! – to

find_previous_entry_for_key() which returns the entry before

the one we are looking for, or, in case such an entry does not

exist, the entry whose next pointer should be pointing to the

entry once we have inserted it. (Please read this paragraph

several times to make sure you get it.)

How can we distinguish between the two possible cases for

find_previous_entry_for_key()?

Here is a first (but buggy – do you see it!??) draft of the insert function:

void ioopm_hash_table_insert(ioopm_hash_table_t *ht, int key, char *value) { /// Calculate the bucket for this entry int bucket = key % 17; /// Search for an existing entry for a key entry_t *entry = find_previous_entry_for_key(ht->buckets[bucket], key); entry_t *next = entry->next; /// Check if the next entry should be updated or not if (next != NULL && next->key == key) { next->value = value; } else { entry->next = entry_create(key, value, next); } }

void%20ioopm_hash_table_insert%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%2C%20char%20%2Avalue%29%0A%7B%0A%20%20%2F%2F%2F%20Calculate%20the%20bucket%20for%20this%20entry%0A%20%20int%20bucket%20%3D%20key%20%25%2017%3B%0A%20%20%2F%2F%2F%20Search%20for%20an%20existing%20entry%20for%20a%20key%0A%20%20entry_t%20%2Aentry%20%3D%20find_previous_entry_for_key%28ht-%3Ebuckets%5Bbucket%5D%2C%20key%29%3B%0A%20%20entry_t%20%2Anext%20%3D%20entry-%3Enext%3B%0A%0A%20%20%2F%2F%2F%20Check%20if%20the%20next%20entry%20should%20be%20updated%20or%20not%0A%20%20if%20%28next%20%21%3D%20NULL%20%26%26%20next-%3Ekey%20%3D%3D%20key%29%0A%20%20%20%20%7B%0A%20%20%20%20%20%20next-%3Evalue%20%3D%20value%3B%0A%20%20%20%20%7D%0A%20%20else%0A%20%20%20%20%7B%0A%20%20%20%20%20%20entry-%3Enext%20%3D%20entry_create%28key%2C%20value%2C%20next%29%3B%0A%20%20%20%20%7D%0A%7D%0A

If you added this to hash_table.c and called the method in

main() (you should!) in the spirit of always making sure that

you have a runnable program, you would see it blow up! Why?

Because we have forgotten about an important edge case, namely

that the way we have coded up the insertion case requires that

there is always a preceding entry – which is not true for the

first entry in the bucket’s list. Doh! (Forgetting a case is a

typical programmer mistake, and you should expect to make it many

times more.)

Luckily, there is an easy fix to fix this error – which may not be intuitive unless you have seen it before: initialise each bucket’s list to start with an entry that we never use except as the previous node to the actual first entry. This trick is common and such an entry is commonly referred to as a “dummy” or the “sentinel”.

Here are two ways to add a dummy entry to the buckets’ lists:

- Add a

forloop toioopm_hash_table_create()that callsentry_create(0, NULL, NULL)and stores the result as the start of each bucket - Change the representation of

entry_t *buckets[17]inhash_table_ttoentry_t buckets[17].

I personally prefer the 2nd alternative (there is an even better

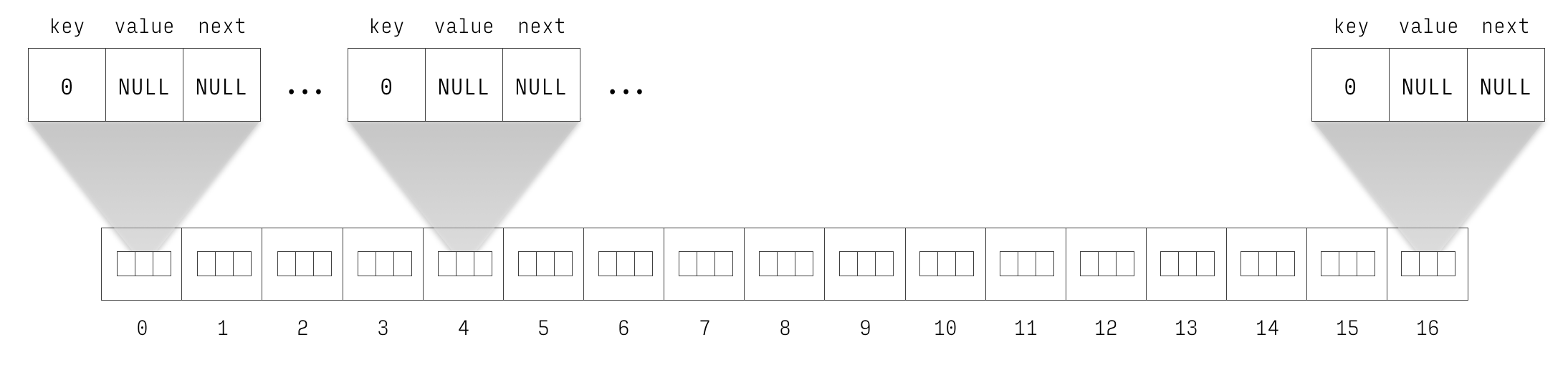

way which we will return to later). Figures 4 and 5

show the changes to the hash table structure in memory. For

clarity, the entry with key 4 is the previous entry of the entry with key 208.

Furthermore, its previous entry of the entry is the dummy (with

key 0, value NULL and next pointer pointing to it.)

Figure 4: Newly initialised hash table with inlined dummy entries.

Figure 5: Newly initialised hash with inlined dummy entries with 4 \(\mapsto\) bar, 17 \(\mapsto\) foo and 208 \(\mapsto\) baz. The entry with key 4 is the previous entry of the entry with key 208.

To make this work, one more change is needed – which is subtle

but important. In the call to find_previous_entry_for_key(),

change ht->buckets[bucket] to &ht->buckets[bucket]. This

passes in the address to the entry in the bucket rather than

passes in a copy of the entire entry. This is a classic issue of

pointer semantics vs value semantics (aka pass by pointer vs. pass

by value).

This implementation represents a classic trade-off: we pay a few extra bytes of memory for a nicer and cleaner implementation. This is almost always worth it, so long as the costs are moderate. If we have a large number of buckets, and a small number of entries in each bucket, the ratio of dummies per entry will be high. As long as the number of buckets is fixed to 17, the overhead is 17 entries, which is negligible on a modern machine.

4.2.1 Finish This Step

Finishing this step means finishing the implementation of

ioopm_hash_table_insert(). That entails the following:

- Implement

find_previous_entry_for_key()following one of the two suggestions from above for how to add a dummy node. - Implement

entry_create(). - Implement a couple of tests for

ioopm_hash_table_insert()following the instructions in Feature 4: Inserting Values. - Make sure that your code compiles with the appropriate flags turned on. Preferably using a Makefile.

- Make sure that the code passes your tests.

- Check your code into the appropriate GitHub repos. Tag your

commit with

assignment1_step1.

Hints: For 1. and 2., you have plenty of help from the initial

stabs at implementing ioopm_hash_table_insert(). For 3., there

is plenty of good stuff in the section on test-driven approach to

implementation.

Note that (at least for now) both find_previous_entry_for_key()

and entry_create() are private functions which are only meant to

be used from inside the hash table. That means both should be

declared static, like so:

static entry_t *entry_create(...TODO...) { ...TODO... }

static%20entry_t%20%2Aentry_create%28...TODO...%29%0A%7B%0A%20%20...TODO...%0A%7D%0A

4.3 Step 2: Looking Up the Value for a Key

The code for insertion already required that

we write some code for finding an entry for a given key. We were

diligent enough to refactor our code to extract the

searching code as a function. That will immediately pay off

because we can reuse that function in the implementation of

ioopm_hash_table_lookup() that looks up the value for a given

key.

Before you look at the code below, take a few seconds to think of

how you would implement ioopm_hash_table_lookup() using the

find_previous_entry_for_key() function.

Here is one possible implementation:

char *ioopm_hash_table_lookup(ioopm_hash_table_t *ht, int key) { /// Find the previous entry for key entry_t *tmp = find_previous_entry_for_key(ht->buckets[key % 17], key); entry_t *next = tmp->next; if (next && next->key == key) { /// If entry was found, return its value... return next->value; } else { /// ... else return NULL return NULL; /// hmm... } }

char%20%2Aioopm_hash_table_lookup%28ioopm_hash_table_t%20%2Aht%2C%20int%20key%29%0A%7B%0A%20%20%2F%2F%2F%20Find%20the%20previous%20entry%20for%20key%0A%20%20entry_t%20%2Atmp%20%3D%20find_previous_entry_for_key%28ht-%3Ebuckets%5Bkey%20%25%2017%5D%2C%20key%29%3B%0A%20%20entry_t%20%2Anext%20%3D%20tmp-%3Enext%3B%0A%0A%20%20if%20%28next%20%26%26%20next-%3Ekey%20%3D%3D%20key%29%0A%20%20%20%20%7B%0A%20%20%20%20%20%20%2F%2F%2F%20If%20entry%20was%20found%2C%20return%20its%20value...%0A%20%20%20%20%20%20return%20next-%3Evalue%3B%0A%20%20%20%20%7D%0A%20%20else%0A%20%20%20%20%7B%0A%20%20%20%20%20%20%2F%2F%2F%20...%20else%20return%20NULL%0A%20%20%20%20%20%20return%20NULL%3B%20%2F%2F%2F%20hmm...%0A%20%20%20%20%7D%0A%7D%0A

The reason for the hmm comment above is that next->value could

also legally be NULL – nothing restricts a user from using

NULL as a value for a key. Thus, using NULL as a marker

denoting failure isn’t a very good idea.

Thinking a bit more mathematically, lookup of a key in a hash table is a partial function because a hash table is not likely to contain every possible key (quite the opposite). C does not have support for partial functions, so we need to lift the function to a total function that simply maps all unmapped keys to some value. It is desirable that this value is not in the domain of the partial function, or we won’t always be able to tell whether we have successfully looked up the value for a key or not.

Someone could argue that NULL should not be a legal string

value, but that argument is going to fall over for say integer

values or float values. Granted, we aren’t supporting these yet,

but in the back of our minds, we know we conveniently forgot about

those to simplify the problem to make progress, in exchange to not

forget them indefinitely.

So, if we cannot use NULL (or any other special value), what are

our options? Here are some:

- Let the function return two values, adding one boolean value

that indicates success (accessing the other returned value is

only alloed if success is

true). - Add a separate public function for checking whether a key is mapped to a value, and only accept valid keys, and terminating the program using e.g., an assertion if an invalid key is detected.

- Use some other means of signalling an error to the user.

We now go through these approaches in more detail.

This aspect of the assignment is a good fit for Achievement I24.

4.3.1 Returning More than a Single Value from a Function

Many modern programming languages support the notion of tuples,

which allow collecting multiple values in a group. If we were

programming e.g., Haskell or Python, we could do something like

return (success, value). C, however, does not support tuples. It

we want to return multiple values using the return statement, we

must create a special struct for doing so. For example, we could

define a struct option for this very purpose:

typedef struct option option_t; struct option { bool success; char *value; };

typedef%20struct%20option%20option_t%3B%0Astruct%20option%0A%7B%0A%20%20bool%20success%3B%0A%20%20char%20%2Avalue%3B%0A%7D%3B%0A

Now we can change the return type of ioopm_hash_table_lookup()

to option_t, and update the return statements: